Supervise Less, See More: Training-free Nuclear Instance Segmentation with Prototype-Guided Prompting

Overview

Given an unlabeled target image and a frozen foundation model, how can we construct reliable instance-level prompts without supervision or parameter updates?

To address this challenge, we propose SPROUT, a fully training-free prompting framework. Our contributions are summarized as follows:

- To the best of our knowledge, SPROUT is the first fully training-free framework for annotation-free nuclear instance segmentation in H&E pathology images. We introduce a novel, lightweight, and generalizable self-reference mechanism that overcomes the limitations of reference-based methods and bridges domain gaps.

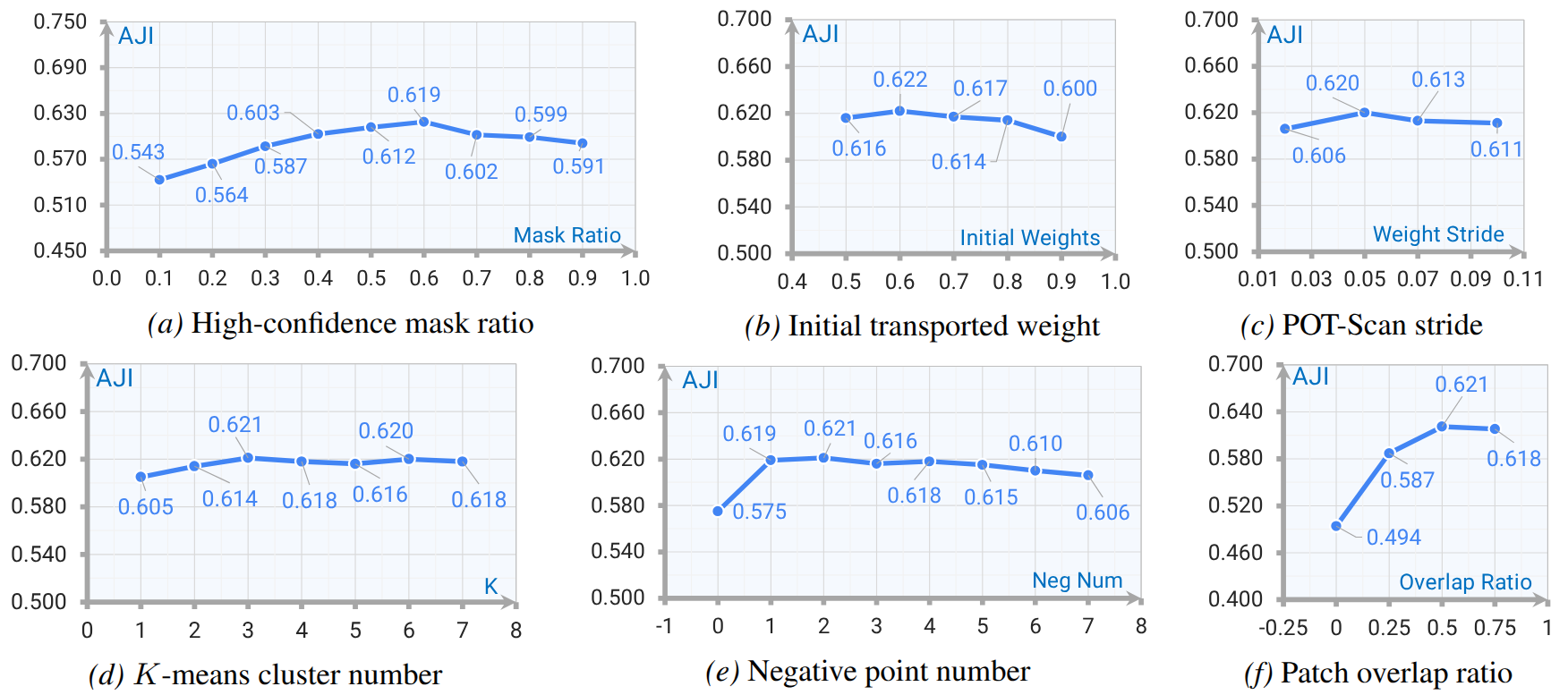

- We propose POT-Scan, a principled scheme with theoretical guarantees that adaptively balances nuclear coverage and noise suppression. Our quantitative and qualitative analyses further elucidate the intrinsic behavior of prompt generation and verify its robust performance under diverse hyperparameter settings.

- Across three challenging benchmarks, SPROUT consistently outperforms its counterparts, with absolute AJI gains of up to $8.2\%$ on MoNuSeg. These results highlight the potential of robust prompt generation and patch-based decomposition to unlock the zero-shot capabilities of vision foundation models in histopathology.

Method

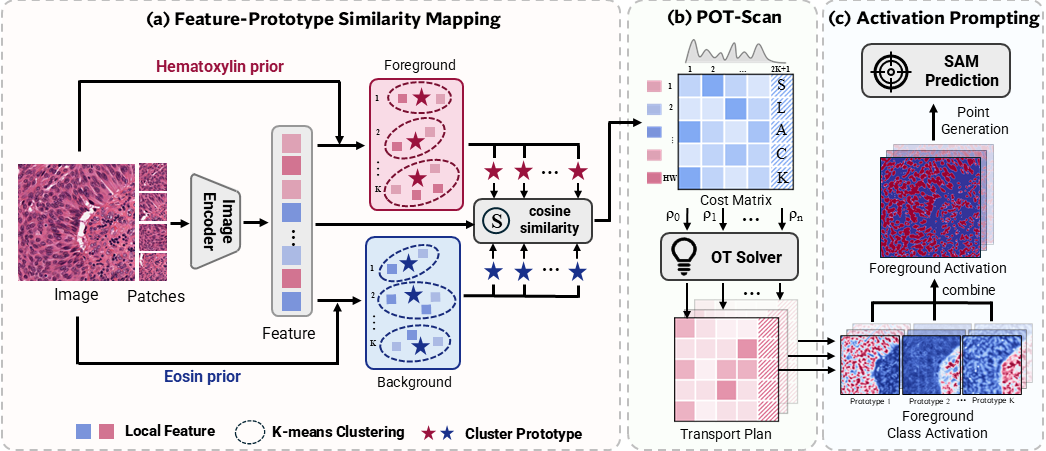

SPROUT consists of three steps:

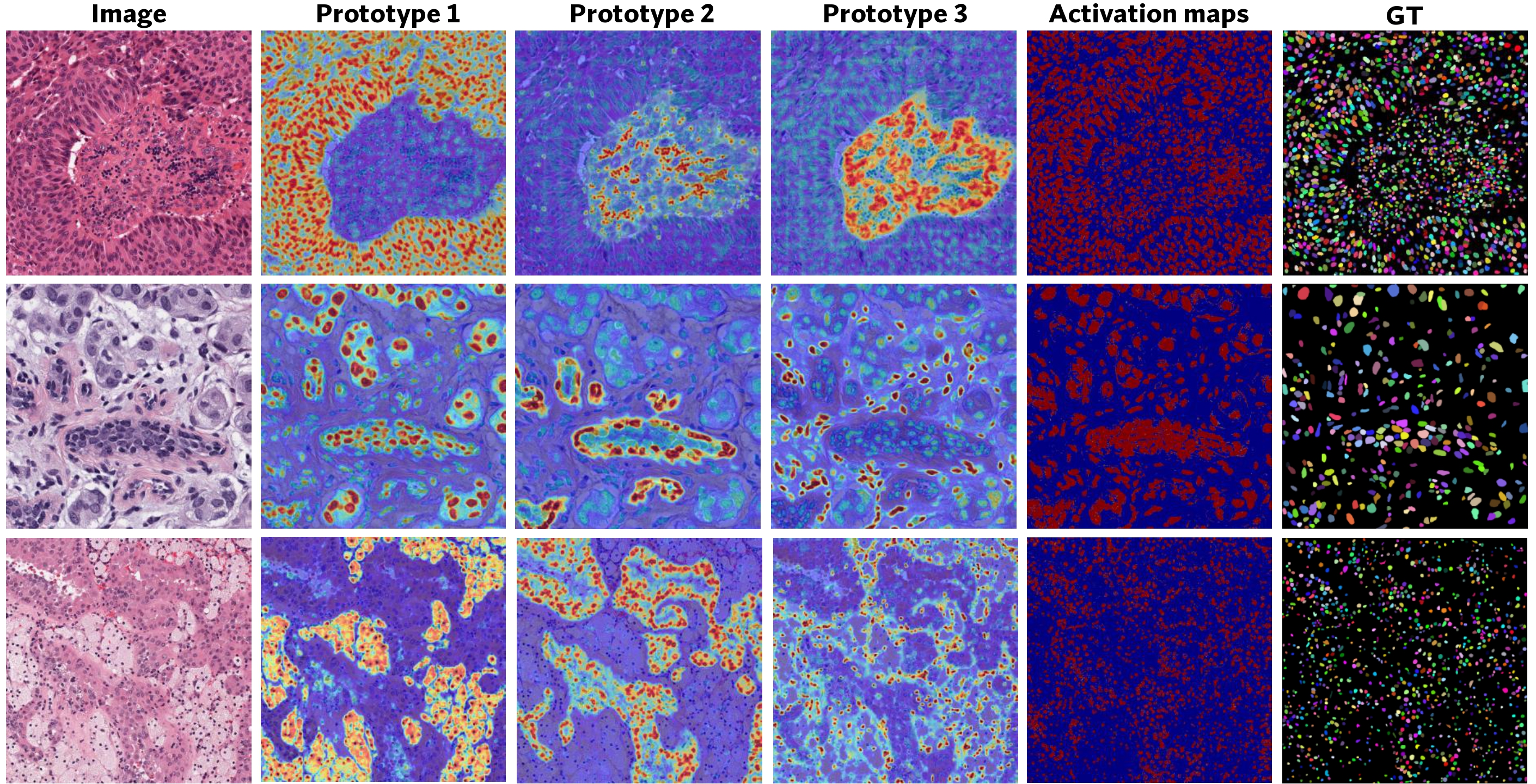

- Feature-prototype similarity mapping: H&E stain priors are used to identify high-confidence foreground and background regions, from which clustering extracts representative prototypes that serve as anchors for similarity matching.

- POT-Scan: a partial optimal transport scheme progressively aligns features to prototypes, filtering ambiguous assignments through partial mass transport.

- Activation prompting: prototype-reweighted activations are aggregated into foreground maps, from which positive and negative point prompts are sampled to guide SAM-based instance prediction.
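
The first two steps can be illustrated with a minimal NumPy sketch. It is not the authors' implementation of POT-Scan: it assumes cosine distance as the transport cost, uniform feature mass, a fixed transported fraction `rho`, and a zero-cost slack column solved with plain Sinkhorn iterations. The names `cosine_cost`, `partial_ot_with_slack`, `assign`, `rho`, and `eps` are hypothetical.

```python
import numpy as np

def cosine_cost(features, prototypes):
    """Cost matrix 1 - cosine similarity, shape (n_features, n_prototypes)."""
    f = features / np.linalg.norm(features, axis=1, keepdims=True)
    p = prototypes / np.linalg.norm(prototypes, axis=1, keepdims=True)
    return 1.0 - f @ p.T

def partial_ot_with_slack(cost, rho=0.7, eps=0.05, n_iters=500):
    """Entropic OT in which only a fraction rho of feature mass reaches prototypes.

    Assumption: a zero-cost slack column absorbs the remaining 1 - rho mass.
    Returns a plan of shape (n, k + 1); the last column is the slack.
    """
    n, k = cost.shape
    a = np.full(n, 1.0 / n)                        # uniform mass per feature
    b = np.append(np.full(k, rho / k), 1.0 - rho)  # prototype masses + slack
    kernel = np.exp(-np.hstack([cost, np.zeros((n, 1))]) / eps)
    u = np.ones(n)
    for _ in range(n_iters):                       # Sinkhorn scaling updates
        v = b / (kernel.T @ u)
        u = a / (kernel @ v)
    v = b / (kernel.T @ u)                         # make column marginals exact
    return u[:, None] * kernel * v[None, :]

def assign(plan):
    """Keep a feature if more of its mass goes to prototypes than to slack."""
    to_protos = plan[:, :-1]
    kept = to_protos.sum(axis=1) > plan[:, -1]
    labels = to_protos.argmax(axis=1)
    return kept, labels

# Toy example: four clear features and two ambiguous ones.
prototypes = np.array([[1.0, 0.0], [0.0, 1.0]])
features = np.array([[1.0, 0.05], [1.0, 0.0], [0.05, 1.0],
                     [0.0, 1.0], [1.0, 1.0], [1.0, 1.0]])
plan = partial_ot_with_slack(cosine_cost(features, prototypes), rho=4 / 6)
kept, labels = assign(plan)
```

In this toy setting the two features that lie midway between the prototypes send most of their mass to the slack column and are filtered out, while the four clear features keep their nearest prototype.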

Results

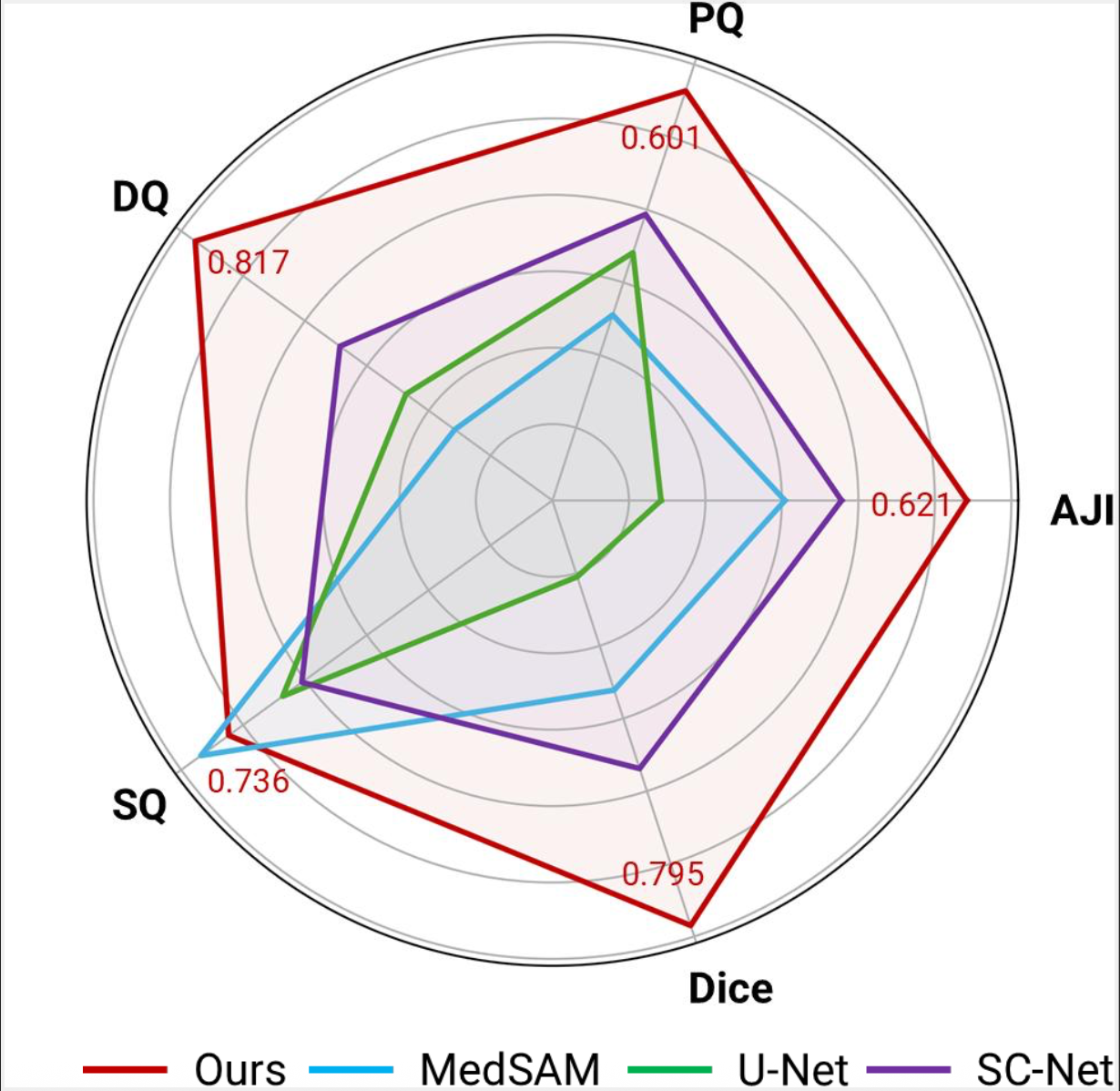

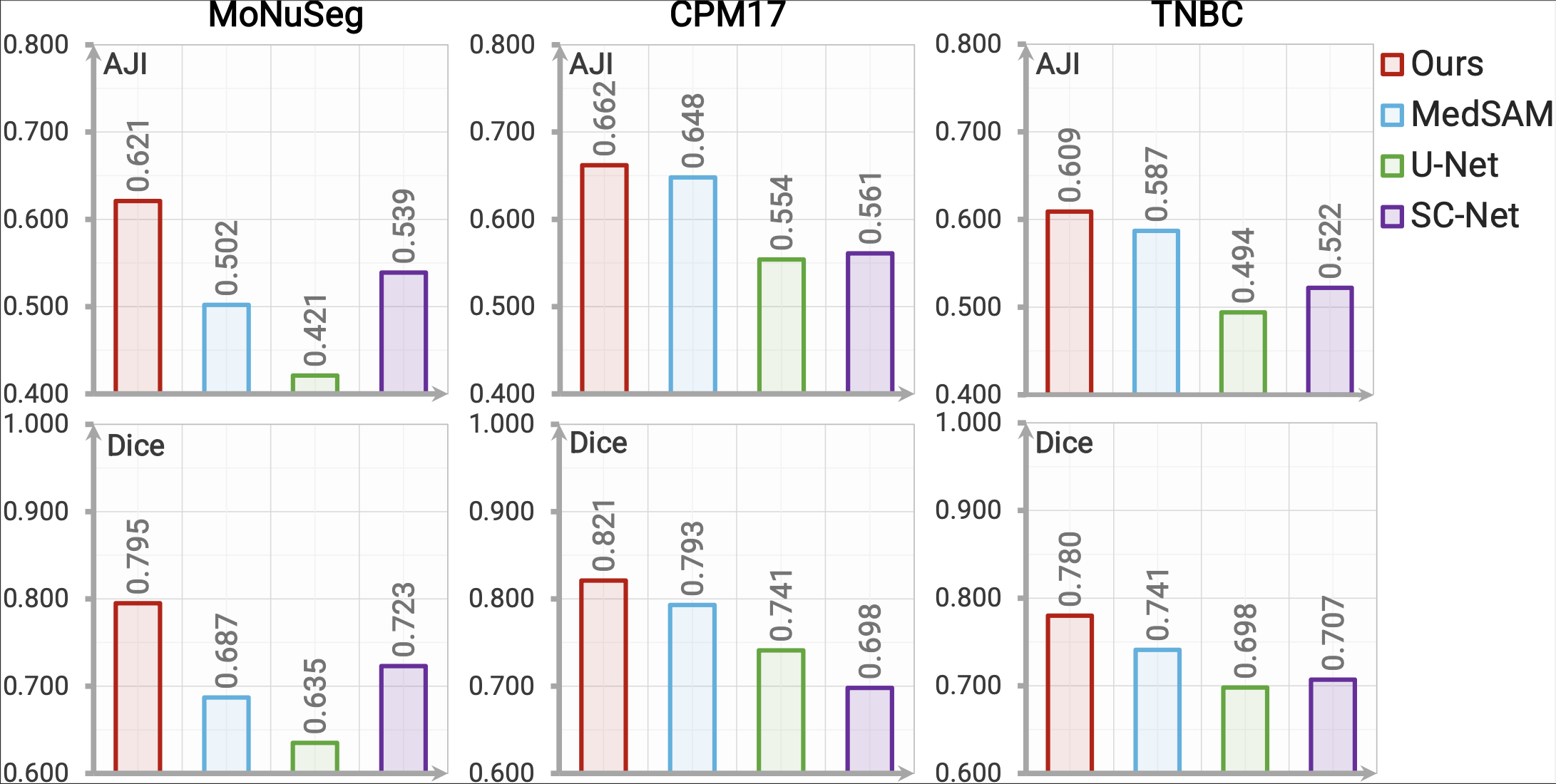

Performance

SPROUT achieves the highest AJI and Dice scores, outperforming all counterparts, with absolute AJI gains of up to 8.2% on the challenging MoNuSeg dataset.
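
For readers unfamiliar with the two metrics, the sketch below follows their commonly used definitions: the Aggregated Jaccard Index (AJI) matches each ground-truth nucleus to the prediction with the highest IoU and penalizes unmatched predictions, while Dice compares binary foreground. It is an illustration under those assumptions, not the evaluation code used for the reported numbers; the function names are hypothetical.

```python
import numpy as np

def aggregated_jaccard_index(gt, pred):
    """AJI for 2D integer label maps where 0 is background."""
    pred_ids = [int(j) for j in np.unique(pred) if j != 0]
    used = set()
    inter_sum, union_sum = 0, 0
    for i in np.unique(gt):
        if i == 0:
            continue
        g = gt == i
        # (best IoU, matched prediction id, intersection, union);
        # an unmatched nucleus contributes its own area to the union.
        best = (0.0, None, 0, int(g.sum()))
        for j in np.unique(pred[g]):      # only overlapping predictions
            if j == 0:
                continue
            p = pred == j
            inter = int((g & p).sum())
            union = int((g | p).sum())
            if inter / union > best[0]:
                best = (inter / union, int(j), inter, union)
        inter_sum += best[2]
        union_sum += best[3]
        if best[1] is not None:
            used.add(best[1])
    # Predictions never matched to any nucleus only enlarge the denominator.
    union_sum += sum(int((pred == j).sum()) for j in pred_ids if j not in used)
    return inter_sum / union_sum if union_sum else 0.0

def dice(gt, pred):
    """Dice coefficient on binary foreground (label > 0)."""
    g, p = gt > 0, pred > 0
    denom = int(g.sum()) + int(p.sum())
    return 2.0 * int((g & p).sum()) / denom if denom else 1.0

# Toy example: one perfect match, one partial match, one false positive.
gt = np.zeros((4, 4), dtype=int)
gt[0:2, 0:2] = 1
gt[2:4, 2:4] = 2
pred = np.zeros((4, 4), dtype=int)
pred[0:2, 0:2] = 1
pred[2:4, 3] = 2
pred[0, 3] = 3
aji_value = aggregated_jaccard_index(gt, pred)   # 6 / 9
dice_value = dice(gt, pred)                      # 12 / 15
```

Because AJI is computed per instance, merged or split nuclei lower it even when the binary foreground, and therefore Dice, is nearly unchanged.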

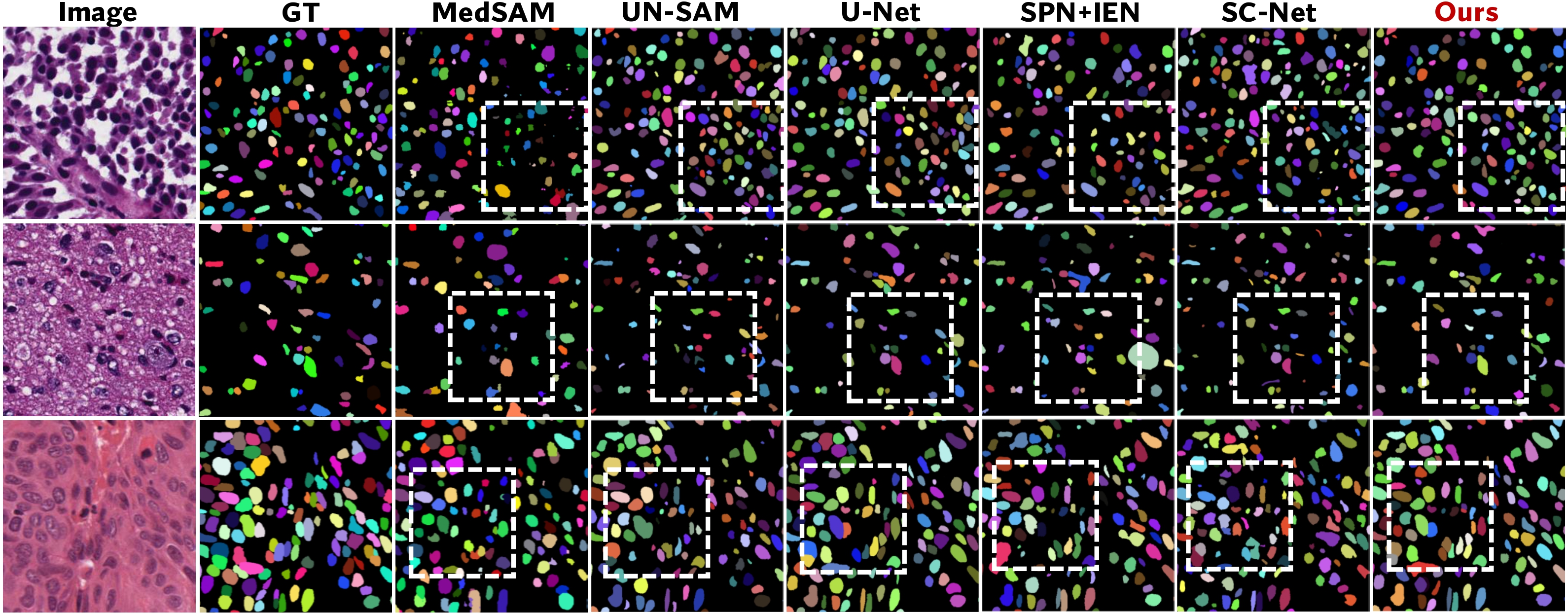

Qualitatively, SPROUT produces clean, non-overlapping masks in challenging cases, such as nuclei that resemble the surrounding tissue in color or lightly stained regions.

Ablation Studies

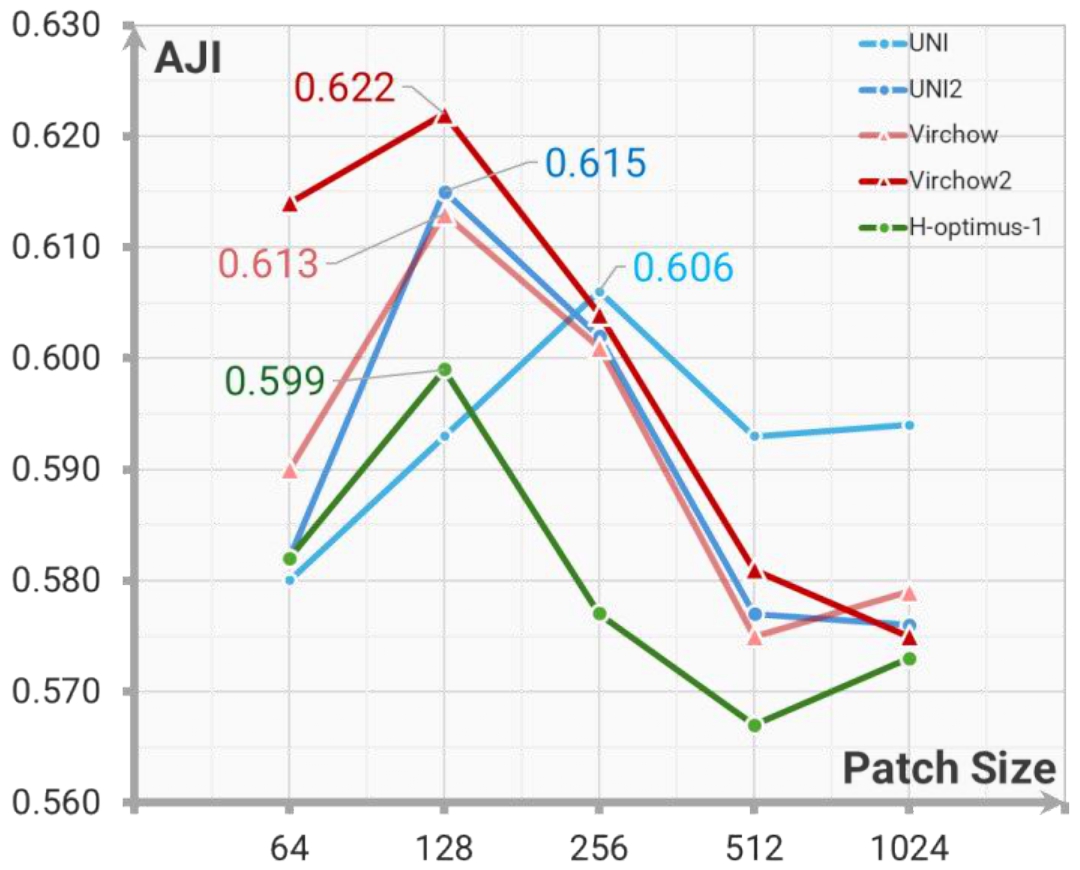

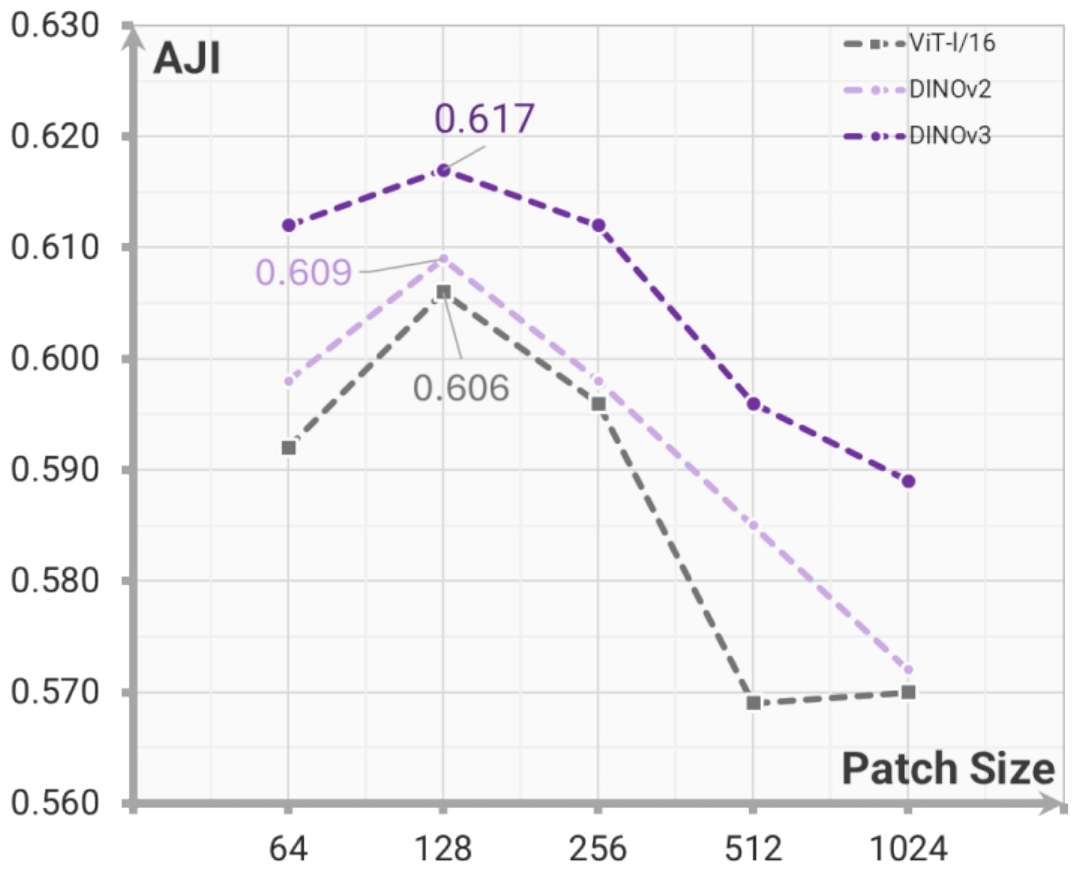

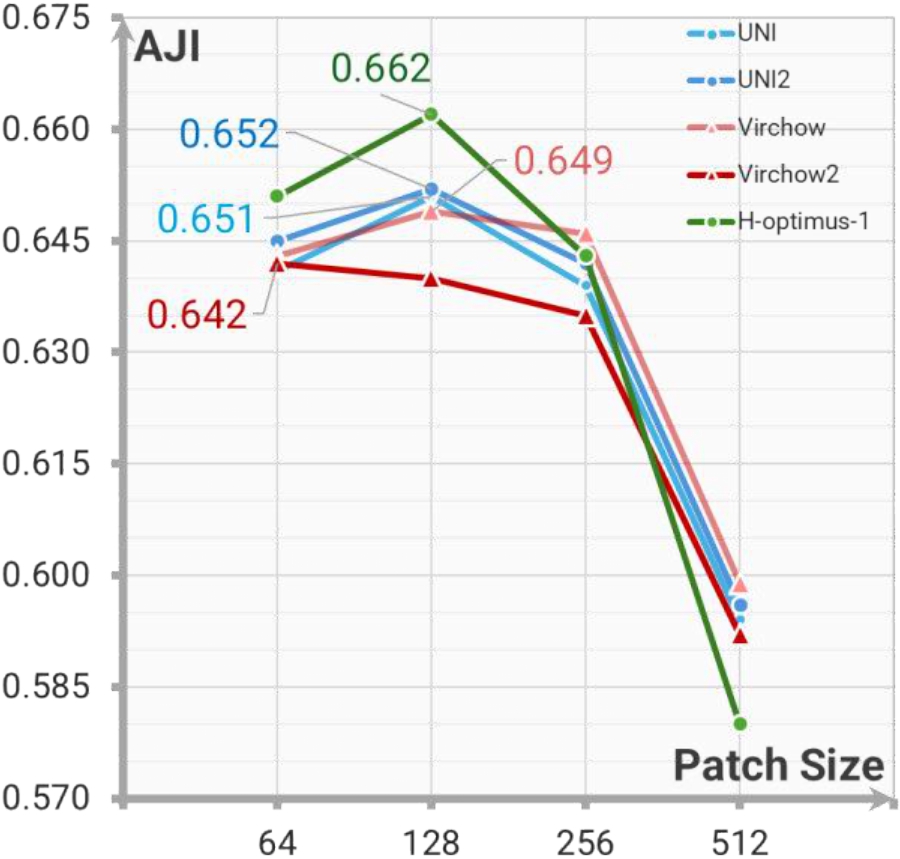

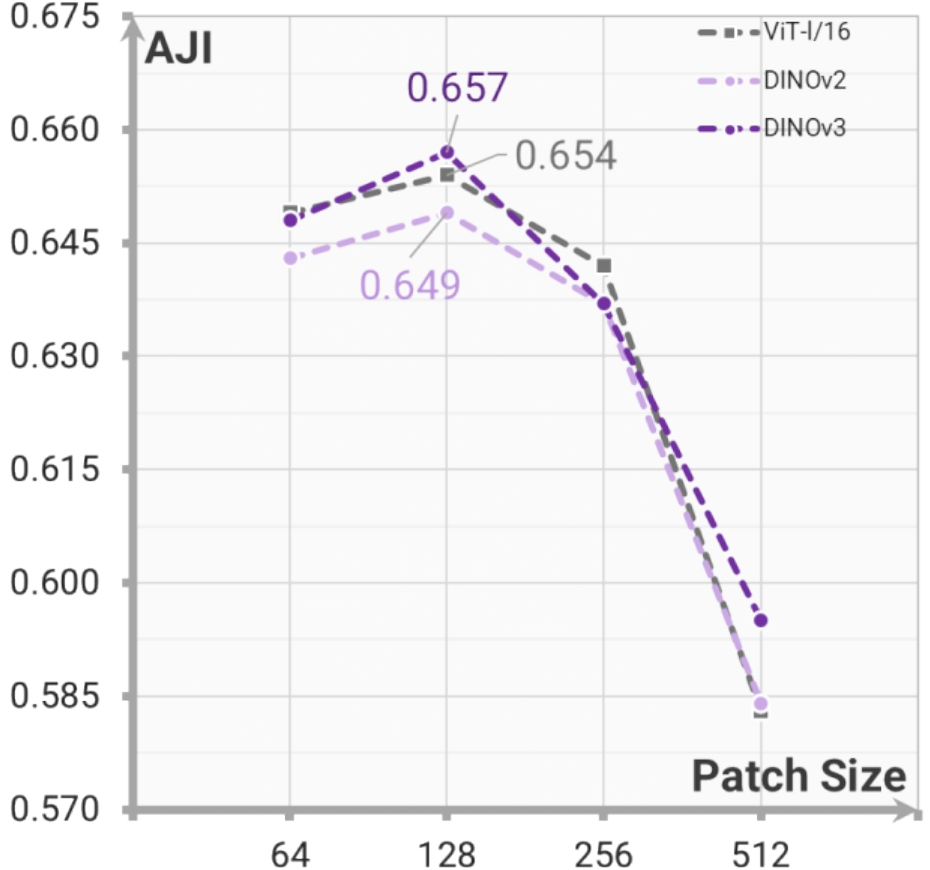

Feature Extractors. To assess the effect of the proposed self-reference mask strategy, we evaluate feature extractors pretrained on both pathology and natural images on the MoNuSeg and CPM17 datasets. The comparable AJI scores achieved by both backbone types validate the effectiveness of the proposed self-reference strategy in a fine-tuning-free setting.

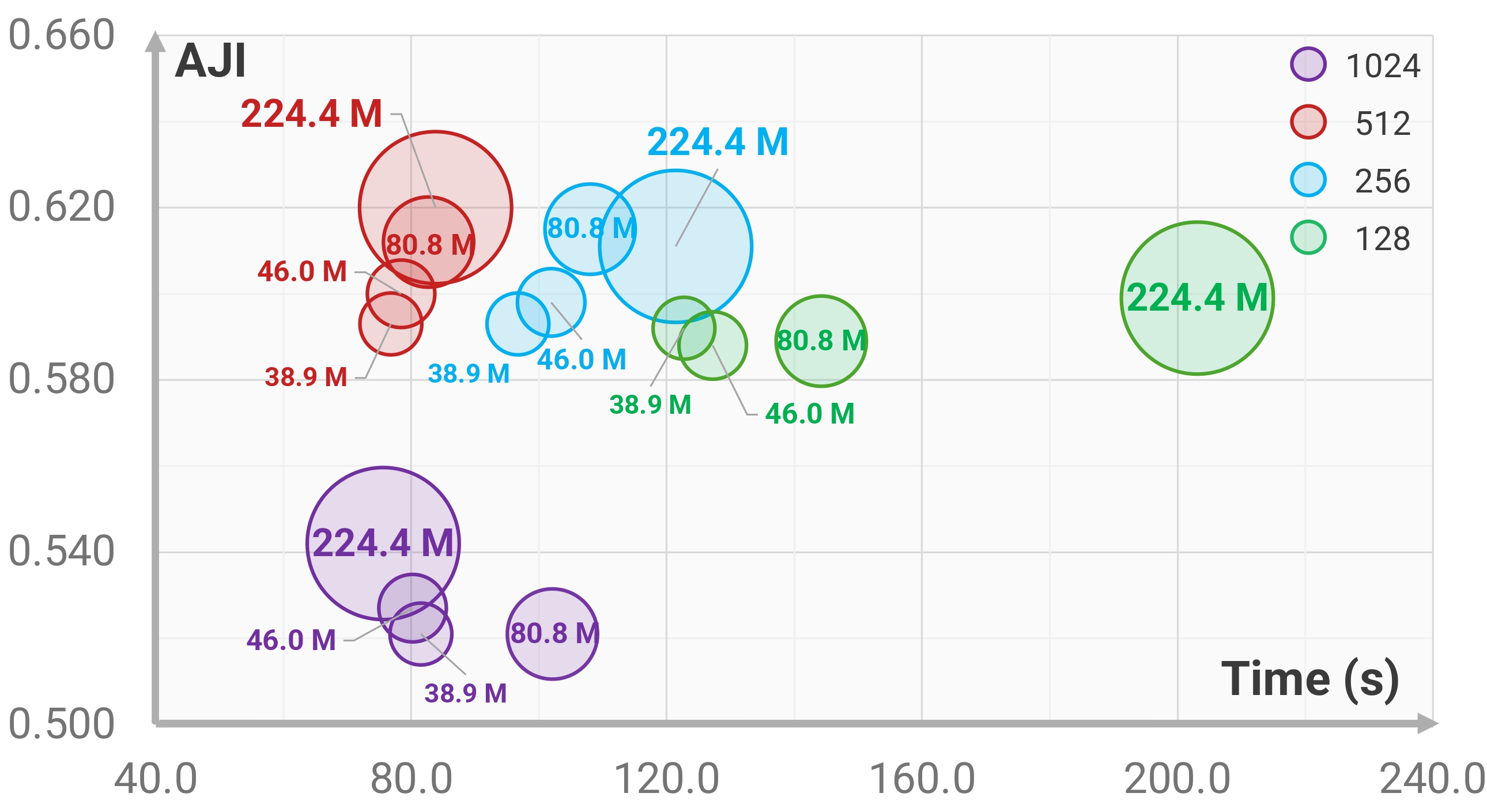

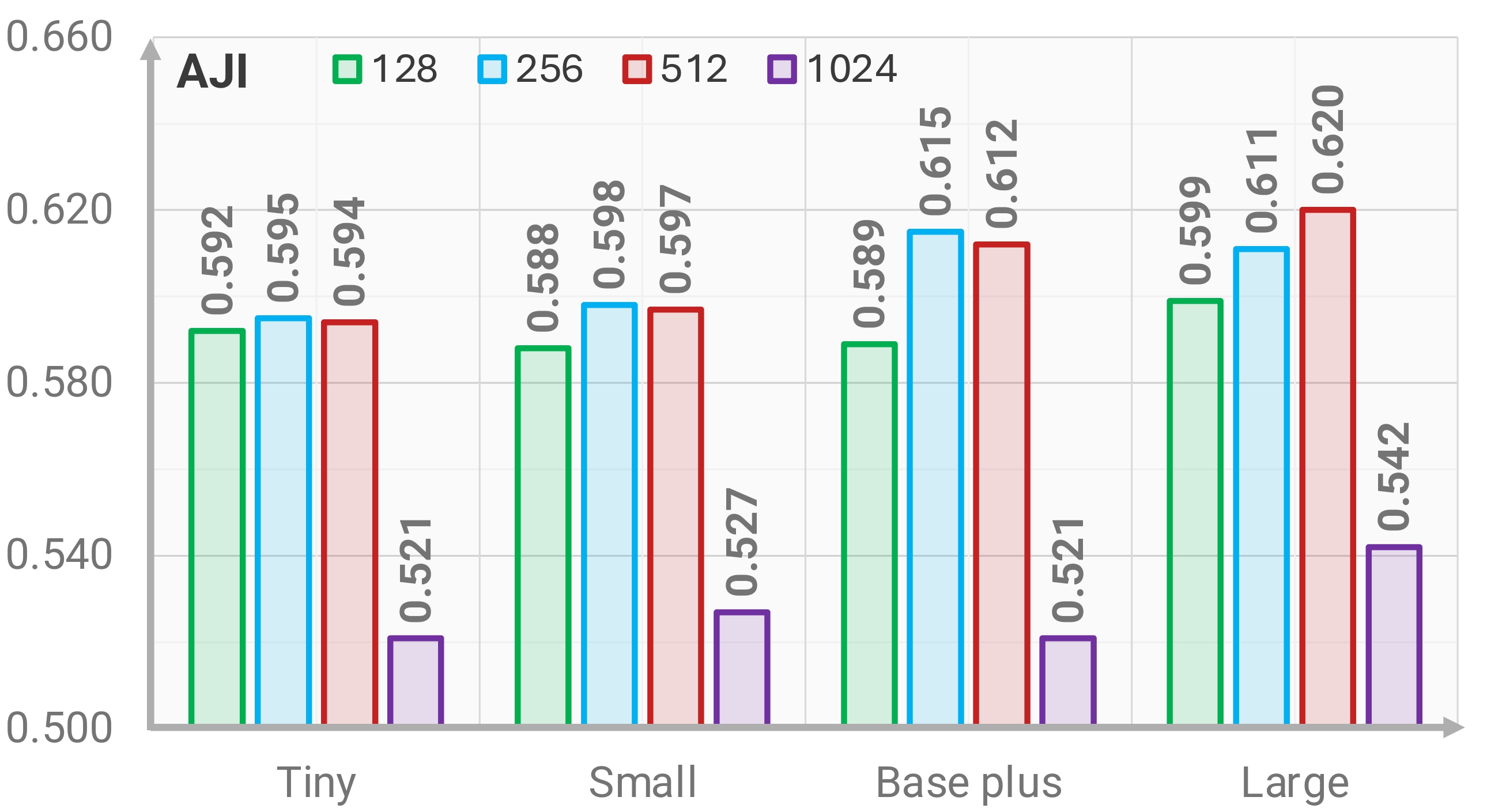

SAM Variants. All SAM variants achieve effective segmentation when paired with appropriate patch sizes, with moderate patching improving AJI by preserving sufficient context while reducing whole-image complexity. Larger models provide the best performance, whereas smaller variants remain competitive and offer practical alternatives for resource-limited computational settings.
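
Patch-based decomposition can be sketched as a sliding window over the image. The patch size and stride below are illustrative assumptions rather than the settings used in the experiments, and `tile_starts` and `tile_windows` are hypothetical helper names.

```python
def tile_starts(size, patch, stride):
    """Start offsets along one axis so that windows cover [0, size)."""
    if size <= patch:
        return [0]
    starts = list(range(0, size - patch + 1, stride))
    if starts[-1] != size - patch:
        starts.append(size - patch)   # snap the last window to the border
    return starts

def tile_windows(height, width, patch, stride):
    """Return (y0, x0, y1, x1) windows that together cover the whole image."""
    return [(y, x, min(y + patch, height), min(x + patch, width))
            for y in tile_starts(height, patch, stride)
            for x in tile_starts(width, patch, stride)]

# Example: a 1000 x 1000 image split into overlapping 256 x 256 patches.
windows = tile_windows(1000, 1000, patch=256, stride=192)
```

Each window can be segmented independently, and the resulting masks can be shifted by `(y0, x0)` to return to whole-image coordinates before duplicates in the overlapping borders are resolved.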

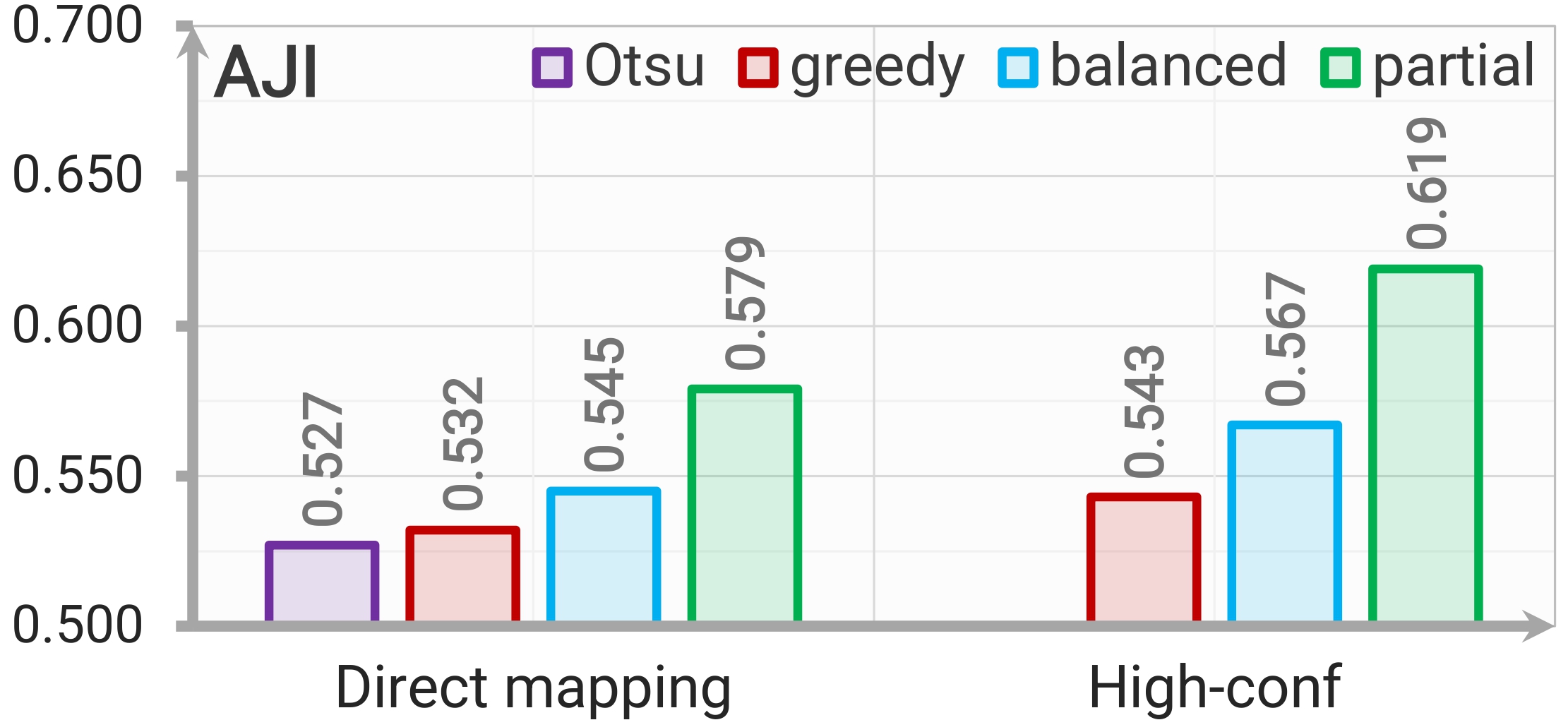

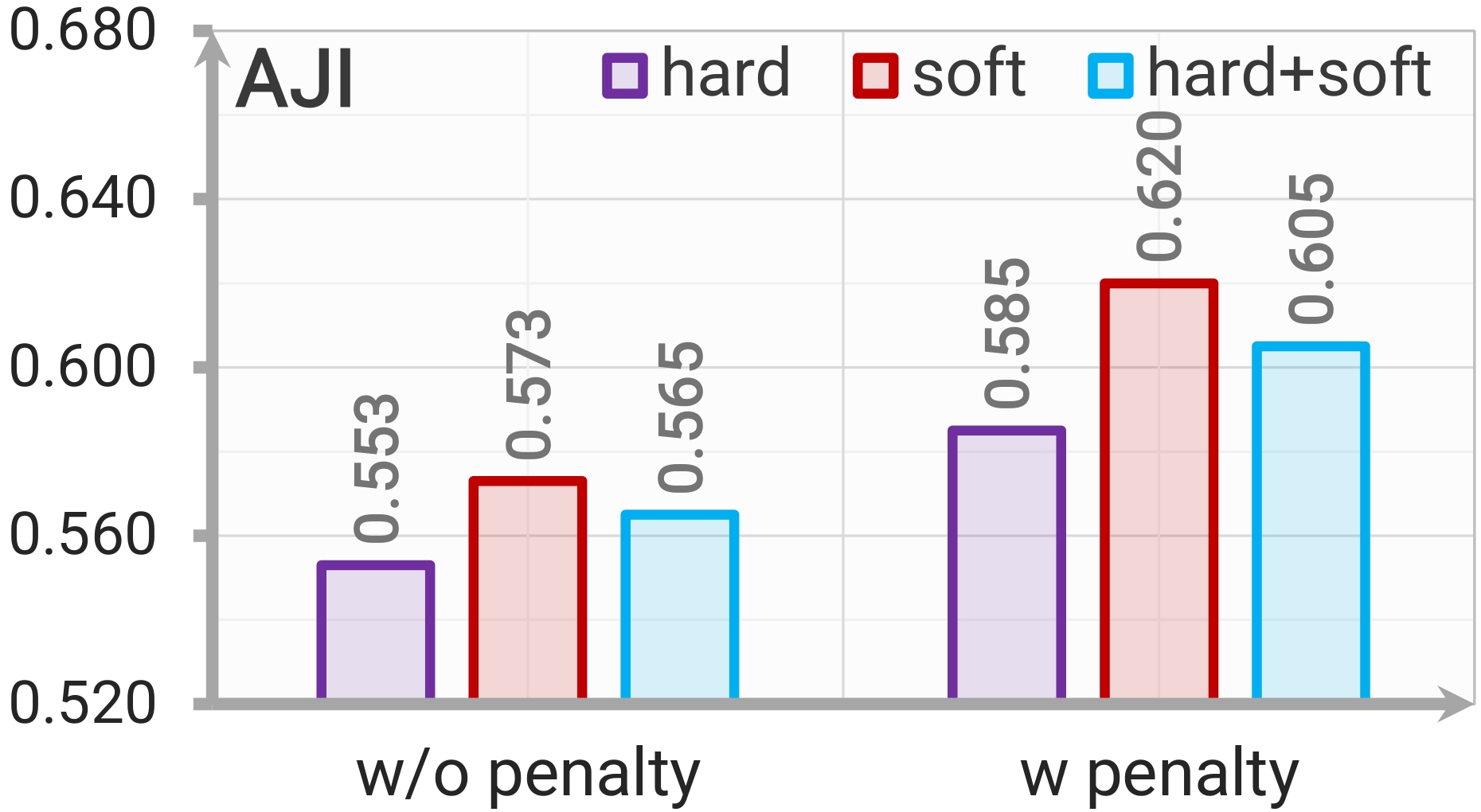

Point Generation and Post-processing. Partial OT generates the most reliable prompts by retaining high-confidence feature-prototype matches while assigning ambiguous regions to slack. The proposed containment-aware soft NMS improves mask refinement by suppressing large multi-nucleus masks while preserving acceptable overlaps in dense nuclear regions.
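
One possible reading of containment-aware soft NMS is sketched below. The text above does not give the exact rule, so everything here is an assumption: a mask is rejected when it almost fully contains at least `min_contained` smaller masks, and the remaining masks go through standard Gaussian soft NMS, which decays scores by overlap instead of discarding masks outright. All parameter names and default values are hypothetical.

```python
import numpy as np

def containment_aware_soft_nms(masks, scores, contain_thr=0.9,
                               min_contained=2, sigma=0.5, score_thr=0.3):
    """Return indices of kept masks, in the order they were selected."""
    n = len(masks)
    flat = np.asarray(masks, dtype=bool).reshape(n, -1).astype(np.int64)
    areas = flat.sum(axis=1)
    inter = flat @ flat.T                            # pairwise intersections
    union = areas[:, None] + areas[None, :] - inter
    iou = inter / np.maximum(union, 1)
    # contain[i, j]: fraction of mask j that lies inside mask i
    contain = inter / np.maximum(areas[None, :], 1)
    scores = np.asarray(scores, dtype=float).copy()

    # Step 1 (assumed rule): reject masks that swallow several smaller masks.
    smaller = areas[None, :] < areas[:, None]
    swallowed = ((contain >= contain_thr) & smaller).sum(axis=1)
    scores[swallowed >= min_contained] = 0.0

    # Step 2: Gaussian soft NMS on the remaining masks.
    keep = []
    alive = scores > score_thr
    while alive.any():
        i = int(np.argmax(np.where(alive, scores, -np.inf)))
        keep.append(i)
        alive[i] = False
        scores[alive] *= np.exp(-iou[i, alive] ** 2 / sigma)
        alive &= scores > score_thr
    return keep

# Toy example on a 10 x 10 grid.
masks = np.zeros((6, 10, 10), dtype=bool)
masks[0, 0:4, 0:4] = True    # nucleus A
masks[1, 0:4, 5:9] = True    # nucleus B
masks[2, 0:4, 0:9] = True    # large mask covering A and B
masks[3, 5:9, 0:4] = True    # nucleus D
masks[4, 5:9, 3:7] = True    # nucleus E, slightly overlapping D
masks[5, 5:9, 0:4] = True    # low-score duplicate of D
keep = containment_aware_soft_nms(masks, [0.9, 0.8, 0.95, 0.7, 0.6, 0.35])
```

In the toy example the large mask is rejected although it has the highest score, the duplicate is suppressed, and the slightly overlapping neighbour survives with a mildly reduced score.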

Class Activation. Each prototype emphasizes distinct morphological patterns, and their combination recovers foreground structures closely aligned with ground truth.
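
The combination of per-prototype activations and the subsequent point sampling might look like the following sketch. It assumes uniform prototype weights by default, subtracts the strongest background response, and picks points greedily with a minimum spacing; none of these choices is confirmed by the text above, and `aggregate_activations` and `sample_points` are hypothetical names.

```python
import numpy as np

def aggregate_activations(fg_maps, bg_maps, fg_weights=None):
    """Combine per-prototype maps of shape (K, H, W) into one foreground map."""
    fg_maps = np.asarray(fg_maps, dtype=float)
    if fg_weights is None:
        fg_weights = np.full(len(fg_maps), 1.0 / len(fg_maps))
    fg = np.tensordot(fg_weights, fg_maps, axes=1)     # weighted sum -> (H, W)
    bg = np.asarray(bg_maps, dtype=float).max(axis=0)  # strongest background
    return fg - bg

def sample_points(score_map, n_points, min_dist):
    """Greedily pick the highest-scoring pixels at least min_dist apart."""
    work = np.asarray(score_map, dtype=float).copy()
    yy, xx = np.mgrid[0:work.shape[0], 0:work.shape[1]]
    points = []
    for _ in range(n_points):
        y, x = np.unravel_index(np.argmax(work), work.shape)
        if not np.isfinite(work[y, x]):
            break                                      # no candidates left
        points.append((int(y), int(x)))
        work[(yy - y) ** 2 + (xx - x) ** 2 < min_dist ** 2] = -np.inf
    return points

# Toy example: two foreground prototypes with different peaks, one background.
fg_maps = np.zeros((2, 8, 8))
fg_maps[0, 2, 2] = 1.0
fg_maps[1, 5, 6] = 0.8
bg_maps = np.zeros((1, 8, 8))
bg_maps[0, 0, 0] = 0.5
fg = aggregate_activations(fg_maps, bg_maps)
positive = sample_points(fg, n_points=2, min_dist=3)    # candidate nuclei
negative = sample_points(-fg, n_points=1, min_dist=3)   # candidate background
```

The `(row, column)` points returned here would need to be converted to the `(x, y)` order and label convention expected by the chosen SAM interface.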

Sensitivity Analysis

Across key hyperparameter settings, SPROUT demonstrates stable point generation and mask prediction performance, with noticeable degradation only under extreme configurations.

Citation

@inproceedings{zhang2025superviselessmoretrainingfree,
  title={Supervise Less, See More: Training-free Nuclear Instance Segmentation with Prototype-Guided Prompting},
  author={Wen Zhang and Qin Ren and Wenjing Liu and Haibin Ling and Chenyu You},
  booktitle={International Conference on Machine Learning},
  year={2026}
}